What is the Google Sandbox Effect in SEO?

The so-called Google Sandbox effect is an unofficial term in SEO for the phase in which new websites or articles have not yet been fully understood, tested, and indexed by Google. Google is placing more and more value on high-quality content.

Since an algorithm update in Google’s search results in 2021, even more value has been placed on the so-called E-A-T (Expertise, Authoritativeness, and Trustworthiness). Google thus wants to ensure quality.

New websites must first establish themselves and prove to be a better resource for the search results than previously existing content.

Why is the Google Sandbox bad for publishers but good for the Internet?

New websites in particular are affected by Google’s sandbox. Their owners find that the content they created is not indexed by Google at all and therefore does not show up in the SERPs (search engine results pages).

For publishers, this means waiting, which results in lower website traffic and possibly lower earnings. However, it is important to understand that this is not a punishment by Google, but a side effect of how the Google algorithm ensures quality.

The sandbox filter therefore helps to improve the Internet, because not every new piece of content is shown immediately. It acts as a hurdle that new content has to clear first. Accordingly, it can be assumed that the results of a search query reflect a certain level of quality.

How can I check if my site is in the Google Sandbox?

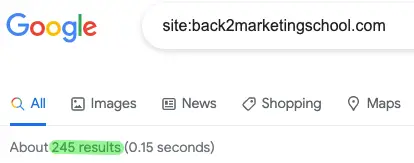

There are several ways to find out how many and which of your pages have already been indexed by Google. First, you can do a Google search with “site:yourdomain.com”. This returns the pages Google has found on your domain.

By the way, this is a good trick to see how many pages your competition has!

However, the better option for your own site is Google Search Console.

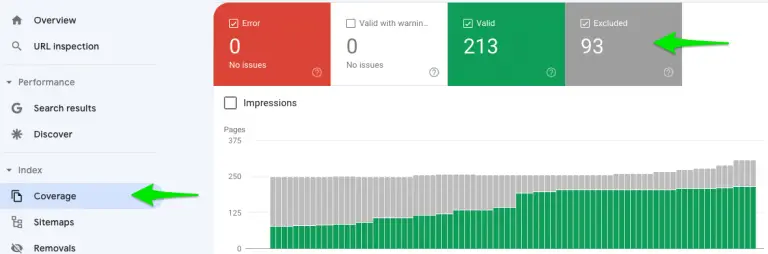

In Google Search Console you can see the indexation of each page:

Search Console -> Index -> Coverage -> Excluded.

Excluded pages are divided into different categories:

- Crawled – currently not indexed

- Excluded by “noindex” tag

- Not found (404)

- Discovered – currently not indexed

- Page with redirect

- Blocked due to other 4xx issue

- Alternative page with proper canonical tag

A detailed listing of the affected page per category can be viewed by clicking on the respective category.

First of all, you should check if the pages that you want to have indexed are excluded by a 4xx code, noindex tag, canonical tag, or a 301 redirect. You should then fix this issue as soon as possible to achieve a positive effect on Google rankings.
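The checks described above can also be scripted for a handful of pages. The following is only a minimal sketch, assuming you already have the HTTP status code and the HTML of a page at hand. The names `IndexabilityParser` and `exclusion_reasons` are made up for this example and are not part of Google Search Console or any other tool.

```python
from html.parser import HTMLParser


class IndexabilityParser(HTMLParser):
    """Collects the robots meta directive and the canonical URL of a page."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attrs.get("name", "").lower() in ("robots", "googlebot"):
            if "noindex" in attrs.get("content", "").lower():
                self.noindex = True
        elif tag == "link" and "canonical" in attrs.get("rel", "").lower().split():
            self.canonical = attrs.get("href") or None


def exclusion_reasons(url, status_code, html):
    """Return a list of likely reasons why `url` is excluded from the index."""
    reasons = []
    if 300 <= status_code < 400:
        reasons.append("redirect")
    elif 400 <= status_code < 500:
        reasons.append("4xx error")

    parser = IndexabilityParser()
    parser.feed(html)
    if parser.noindex:
        reasons.append("noindex tag")
    # A canonical tag that points to a different URL tells Google to index that URL instead.
    if parser.canonical and parser.canonical.rstrip("/") != url.rstrip("/"):
        reasons.append("canonical points elsewhere")
    return reasons


page = (
    '<html><head><meta name="robots" content="noindex, follow">'
    '<link rel="canonical" href="https://example.com/a/"></head></html>'
)
print(exclusion_reasons("https://example.com/b/", 200, page))
# → ['noindex tag', 'canonical points elsewhere']
```

An empty list means that none of these four causes applies, so the page is more likely sitting in one of the two “currently not indexed” categories.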

It is not a problem if pages are deliberately excluded.

Read on to learn how you can prevent and solve the other problems in the best possible way.

What is the difference between Crawled – currently not indexed and Discovered – currently not indexed in GSC?

Google’s approach to covering (indexing) a website is as follows: First, the site is scanned, and all pages Google finds are recognized. Initially, these pages are marked as “discovered”, but they have not necessarily been crawled yet. Crawling in this case means fetching the page and trying to understand it completely. This requires more resources than just recognizing that a page exists.

If pages have been crawled but not yet indexed, Google has deliberately decided not to include them in the index yet.

How long is a new website in the Google Sandbox?

Unfortunately, there is no universal answer to the question of how long a new website is affected by Google’s sandbox effect. As a rule of thumb, it can last 3, 6, or even 9 months. If you want to launch a new website, you should definitely plan for 6 months, and expect real results only after 12 months.

The duration also depends on several factors: how much content has been published, how well the site is structured, whether there are external signals, and how difficult the focus keywords are to rank for.

How do I get out of Google’s sandbox?

The fastest way to escape Google’s sandbox effect is to make it easier for Google to fully understand your site and to become an authority in your niche. That sounds quite simple at first, but how do you achieve it? Basically, we are talking about search engine optimization (SEO) best practices:

Healthy on-site SEO foundation without technical errors. This not only helps with crawling the site by search engines but also with usability when your site is visited.

Quality content that is simply better than previous search results for the focus keyword. This applies to product and service pages, but also to informative content like blog posts.

Often, just 5 pages are not enough. The more content you publish, the more opportunities you give Google to understand the site, and the easier it is to build topical authority. I myself usually start with 10 to 30 pages for each website I build.

Internal linking connects the above points by cross-linking between content on your site. This helps not only in understanding what the site is about (topical relevance and authority) but also in finding new pages. Internal links help search engine crawlers discover new pages, as crawlers follow the links on a page. At the same time, internal links signal the importance of a page. In short: a page with many internal links pointing to it seems to be important.

In Google Search Console you can even see the internal links of currently non-indexed pages.
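If you want a rough picture of your internal linking outside of Search Console, you can count inbound internal links yourself. This is only a sketch under the assumption that you already have the HTML of your pages in a dictionary. `LinkParser` and `inbound_internal_links` are hypothetical helper names, and the example.com pages are invented.

```python
from collections import Counter
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkParser(HTMLParser):
    """Collects the href of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def inbound_internal_links(pages):
    """`pages` maps URL -> HTML. Returns how many other known pages link to each URL."""
    counts = Counter({url: 0 for url in pages})
    for source_url, html in pages.items():
        parser = LinkParser()
        parser.feed(html)
        host = urlparse(source_url).netloc
        targets = set()  # count each linking page only once per target
        for href in parser.hrefs:
            target = urljoin(source_url, href).split("#")[0]
            if urlparse(target).netloc == host and target != source_url and target in pages:
                targets.add(target)
        for target in targets:
            counts[target] += 1
    return counts


pages = {
    "https://example.com/": '<a href="/blog/">Blog</a> <a href="/about/">About</a>',
    "https://example.com/blog/": '<a href="/">Home</a> <a href="/about/">About</a>',
    "https://example.com/about/": '<a href="/">Home</a>',
    "https://example.com/new-post/": '<a href="/">Home</a>',
}
counts = inbound_internal_links(pages)
orphans = [url for url, n in counts.items() if n == 0]
print(orphans)
# → ['https://example.com/new-post/']
```

Pages that show up as orphans here have no internal links pointing to them, so they are good candidates for a link from a related article or a category page.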

External signals are also very important and help to get out of the sandbox effect. Social Media signals are a good medium to show Google that the content is liked by real people.

Whitehat backlinks are also a great way to show authority. External links to your site are scored like an up-vote. They help to signal authority and, similar to internal links, they also help your pages get crawled. If Google’s crawler comes across your page via a backlink, this is a good sign of recognition.

In addition, the sitemap should be submitted to Google, especially when launching a site or after major adjustments.
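Most CMS and SEO plugins generate the sitemap for you. If you need to build one by hand, a minimal sketch could look like the following. It assumes the standard sitemaps.org XML format with only `loc` and `lastmod` entries; the function name `build_sitemap` and the example URLs are made up for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """`entries` is an iterable of (url, lastmod); lastmod is 'YYYY-MM-DD' or None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in entries:
        node = ET.SubElement(urlset, "url")
        ET.SubElement(node, "loc").text = url
        if lastmod:
            ET.SubElement(node, "lastmod").text = lastmod
    # Prepend the XML declaration manually so the output does not depend on the locale.
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")


sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/blog/google-sandbox/", None),
])
print(sitemap_xml)
```

Save the result as `sitemap.xml` on your domain and submit its URL in Google Search Console.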

But what can I do if I’m still stuck in the Google sandbox effect despite my SEO efforts?

Indexing tools to escape Google’s sandbox effect

There are some tools and approaches that can help you with indexation problems.

Request manual indexing

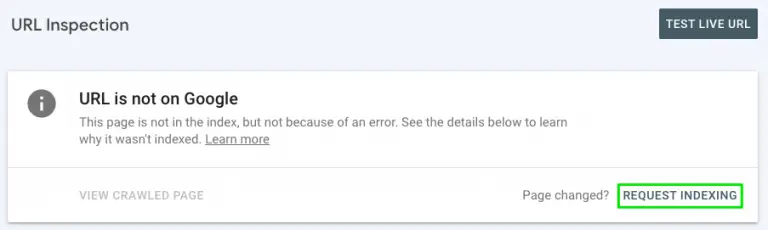

If you have a small number of pages that have not been indexed, you can request manual indexing. Indexing can be requested via the URL Inspection tool in Google Search Console:

The number of requests is limited to about 10 pages per day, and the feature should not be used too often. Especially for pages you have already submitted, the request should not be repeated frequently (preferably not at all).

RankMath WordPress PlugIn – Instant Indexing

RankMath is a very good SEO plugin for WordPress. It includes a function that submits pages for instant indexing via an indexing API. BUT this is NOT for Google; it is for Bing.
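To give an idea of what such a submission looks like under the hood, here is a rough sketch. It assumes the feature is built on the IndexNow protocol, which Bing supports, and it only builds the request without sending it. The function name `build_indexnow_request`, the key value, and the URLs are placeholders of mine; a real key has to be generated by you and hosted as a text file on your domain.

```python
import json
import urllib.request

# Shared IndexNow endpoint; participating search engines pass submissions on to each other.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls):
    """Build (but do not send) an IndexNow POST request for a list of URLs."""
    payload = {
        "host": host,
        "key": key,
        # Assumption: the key file lives at the root of the domain.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


request = build_indexnow_request(
    host="example.com",
    key="0123456789abcdef",  # placeholder key, not a real one
    urls=["https://example.com/blog/google-sandbox/"],
)
print(request.get_method(), request.full_url)
# → POST https://api.indexnow.org/indexnow
```

Sending the request would be a single `urllib.request.urlopen(request)` call. I left it out on purpose so that the example can be run without submitting anything.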

Google also has an API integration for instant indexing, the Indexing API. However, it may only be used for certain content types. According to Google’s documentation, these are job postings and livestream videos.

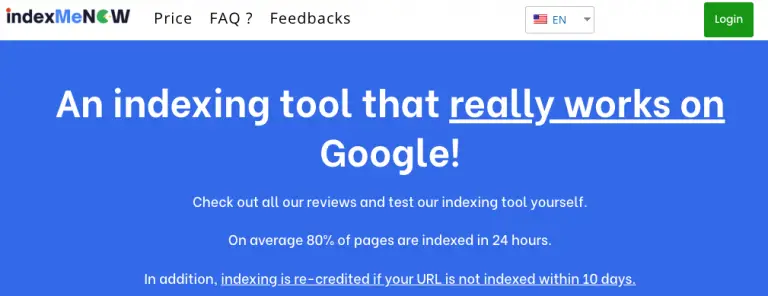

IndexMeNow Bulk Index Service

IndexMeNow is a bulk indexing tool that aims to get your non-indexed pages indexed on Google within 10 days. If the pages you ordered have not been indexed successfully within the 10-day limit, you will get the credits for these pages refunded.

I have used this service myself and it worked great.

If you want to try IndexMeNow to get out of Google’s sandbox you can use my coupon code B2MS10 to save 10%.

Compound Effect of Indexed Pages

Having more pages indexed results in a compound effect. The more pages Google has listed and crawls, the easier and quicker it picks up new pages and changes made to existing pages.

While you shouldn’t keep unnecessary pages on your site, so that crawl budget is not wasted, each valuable indexed page might help Google discover and understand a new page. Internal links (yet again) help the search engine spiders to crawl your site more effectively.

The topical authority also usually deepens with more content on the site. Once you are out of Google’s sandbox and have more content on your blog or website, you’ll find that new pages get indexed way quicker than before.

Sascha is a Lifecycle Marketing Consultant with over 8 years of digital marketing experience in Silicon Valley, the UK, and Germany.

After leading demand generation for a 100+ million company, he decided to venture out on his own. He’s now helping clients to attract and convert more leads and customers.

His main focus areas are SEO, paid media, and marketing automation, all with the goal of tying marketing campaigns to revenue.

Sascha has been featured in industry publications.